Working on a VUE concept map of the fieldTest simulation

Posted 22 Jun 2013

Jennifer Verdolin, Dylan Moore, and I created the fieldTest simulator several years ago. This individual-based simulation lets virtual animals, which have the potential to form social groups that defend territories, interact on landscapes containing different patterns and abundances of resources. It is part of my larger research into group territorial behavior. We presented our results at the 2009 and 2010 Ecological Society of America meetings, but it is now (finally!) time to write this work up.

A major challenge of creating individual-based models (IBMs) is their complexity: rather than being governed by a few simple and elegant equations (like classic population ecology models), IBMs are more like machines with many moving parts. They run as computational simulations rather than operating in the truly abstract world of math. They are implemented in computer code, which is notoriously difficult to “read for meaning” if you are not a computer. Of course those of us who create IBMs believe that their complexity is tied to their value: unlike differential equations, which generally must make extraordinary assumptions in order to implicitly represent a system, IBMs can make explicit the mechanisms and processes that we suspect make the system work. So the burden of IBM complexity is seen as worth shouldering for the sake of ecological and evolutionary realism.
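To make the contrast concrete, here is a minimal sketch that sets a one-line equation model beside a toy IBM. It is not fieldTest: the names `logistic_step`, `Animal`, and `ibm_step`, and every rule and number in the toy model, are assumptions invented for illustration.

```python
import random


def logistic_step(n, r, k):
    """Equation-style model: one line updates the whole population."""
    return n + r * n * (1 - n / k)


class Animal:
    """A hypothetical individual with a single state variable."""

    def __init__(self, energy):
        self.energy = energy


def ibm_step(animals, food_items, rng):
    """Toy IBM step: every individual is processed explicitly, in random order."""
    rng.shuffle(animals)              # who reaches the food first varies each step
    remaining = food_items
    survivors = []
    for animal in animals:
        animal.energy -= 1            # metabolic cost (assumed rule)
        if remaining > 0:             # contingency: is any food left?
            animal.energy += 2
            remaining -= 1
        if animal.energy <= 0:        # contingency: starvation
            continue
        survivors.append(animal)
        if animal.energy >= 4:        # contingency: enough energy to reproduce
            animal.energy -= 2
            survivors.append(Animal(energy=2))
    return survivors


rng = random.Random(42)
population = [Animal(energy=3) for _ in range(10)]
population = ibm_step(population, food_items=5, rng=rng)
print(len(population), logistic_step(10, 0.5, 100))
```

Even this toy version already has three contingencies and an ordering effect that the equation never has to state, which is exactly the kind of moving part a reader cannot see unless the modeler spells it out.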

If making complex algorithmic IBMs is tough, communicating them is even more difficult. Whereas a modeler using only equations to represent a system can lay out the essence of the model in just a few lines, the modeler employing an IBM has to lay out every last (meaningful) detail of the computer program that performs the simulations. This is difficult simply from the perspective of organization: how do I present an IBM in a way that is user-friendly, logical, and comprehensive? How do I deal with detail? Some readers will need to understand every last detail of the model, whereas others will just want to understand the basic structure, so how do I make both kinds of reader happy?

Luckily, some prominent IBMers have come up with the ODD protocol (Grimm et al. 2006, 2010), which creates a standardized method for describing IBMs. ODD stands for “Overview, Design Concepts, Details”, and if a modeler addresses all of the elements of the protocol, the reader should be provided with a description that is both comprehensive and hierarchical in its presentation of information. This hierarchy is a bit like the standard newspaper article format that I learned about in high school: you write the most critical elements of the story in the first few paragraphs, and then use subsequent paragraphs to fill in more details. Readers who want the basic story can read only a bit, and those who want all the details can read from start to finish. ODD presents information about the model in the same hierarchical sequence.
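The hierarchy can be sketched as a simple checklist. The element names below follow the seven-element list in the 2010 update of the protocol, grouped under the three ODD blocks; the `missing_elements` helper and the draft description are hypothetical, meant only to show how a modeler might check a write-up for gaps.

```python
# The three ODD blocks and their elements (names per the 2010 update).
ODD_ELEMENTS = {
    "Overview": [
        "Purpose",
        "Entities, state variables, and scales",
        "Process overview and scheduling",
    ],
    "Design concepts": ["Design concepts"],
    "Details": ["Initialization", "Input data", "Submodels"],
}


def missing_elements(description):
    """Return (block, element) pairs that are absent or empty in a draft.

    `description` is a hypothetical dict mapping element names to text.
    """
    missing = []
    for block, elements in ODD_ELEMENTS.items():
        for element in elements:
            if not description.get(element, "").strip():
                missing.append((block, element))
    return missing


# A draft that so far covers only two of the seven elements.
draft = {
    "Purpose": "(placeholder text)",
    "Submodels": "(placeholder text)",
}
for block, element in missing_elements(draft):
    print(f"{block}: still need '{element}'")
```

Because the dictionary preserves the Overview, Design concepts, Details order, the gaps are reported in the same sequence a reader would encounter them.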

This is a post for another day, but the vast majority of computational models that I encounter are not adequately communicated by their authors. Without getting into why this might be the case, I want to note how profoundly problematic this trend is for the scientific process. Science is built upon the premise that all work should be replicable: the only way to confirm a scientific finding is to have it independently replicated multiple times. If a model is not adequately described when its results are published, it is useless to science, because no one will be able to replicate the work. For this reason I am committed to making sure that the fieldTest model is comprehensively described in our upcoming paper.

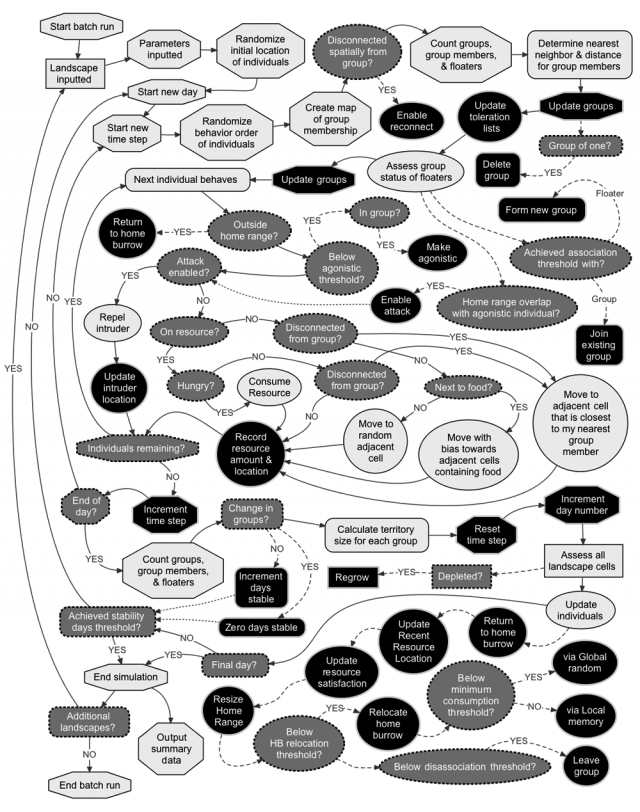

One of the best ways to portray the basic structure of the model is a sequencing diagram that describes exactly what algorithms and processes are run in relation to each other as each simulation run proceeds. These sequencing diagrams are really just a specialized version of a concept map. I just completed a sequencing diagram for fieldTest using VUE:

In making this diagram I constrained myself to what would be a figure taking up an entire regular-sized piece of paper, leaving a little room for a figure legend. This proved to be challenging, as in the past I just let my concept maps sprawl all over the workspace because they were part of my process rather than being a product. The diagram above is meant to be included as part of our paper (probably in the Supplementary Material), so it needs to use space efficiently. It took a lot of fiddling around to get all the nodes and connectors to fit together into the rectangular space I allowed myself: a lot like doing a puzzle or packing a vehicle before a big trip. As I have discussed before, good concept maps create a meaningful geography of ideas, and in this case the spatial representation of different processes had to make the model’s operation crystal clear to the viewer. Although I had to do a lot of criss-crossing across horizontal space, I am pretty proud of how “followable” the diagram turned out to be. Notice how there is only one set of crossed connectors despite all the complexity of the diagram (and this crossing was necessary due to the combinatorial nesting of contingencies in one particular part of our IBM algorithm).

I also tried to use best practices for information design in my diagram. Different node fills represent different components of the model: light gray is for processes, dark gray (with dashed outlines) is for contingencies, and black is for variable updates. Different node shapes represent different scales: octagons are for the global scale, hard rectangles for the landscape scale, rounded rectangles for the group scale, and ellipses for the individual scale. The solid connectors show the main flow of the program’s sequence, whereas the dashed connectors show sub-algorithms performed along this main sequence. Dotted connectors show how updates influence contingencies further down the program’s sequence. I chose not to use color, as it is likely that this paper will be published in a journal where color printing will not be an option for us. Color also was not necessary, as variations in grayscale were adequate to represent the variation in the information that I had to present.
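The legend described above amounts to three small lookup tables, which can be written down as data. This is only a sketch of the encoding: the `Node` class, the `describe` helper, and the example label "forage" are hypothetical and do not come from the VUE file or the fieldTest code.

```python
from dataclasses import dataclass

# Fill encodes which kind of model component a node is.
FILL_MEANING = {
    "light gray": "process",
    "dark gray, dashed outline": "contingency",
    "black": "variable update",
}

# Shape encodes the scale at which the component operates.
SHAPE_SCALE = {
    "octagon": "global",
    "rectangle": "landscape",
    "rounded rectangle": "group",
    "ellipse": "individual",
}

# Connector style encodes the relationship between two nodes.
CONNECTOR_MEANING = {
    "solid": "main flow of the program's sequence",
    "dashed": "sub-algorithm performed along the main sequence",
    "dotted": "update influencing a contingency further down the sequence",
}


@dataclass
class Node:
    label: str   # hypothetical node text
    fill: str    # a key of FILL_MEANING
    shape: str   # a key of SHAPE_SCALE


def describe(node):
    """Translate a node's visual attributes back into what they mean."""
    return f"{node.label}: {FILL_MEANING[node.fill]} at the {SHAPE_SCALE[node.shape]} scale"


print(describe(Node("forage", "light gray", "ellipse")))
```

Keeping fill and shape as independent channels is what lets three fills and four shapes cover every combination of component and scale without any color.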

I also used layers to separate out some of the major submodels of fieldTest, but you will not be able to see these in the image above. If you are interested in seeing the actual VUE file and how it uses layers to make sense of the programming architecture of fieldTest, contact me.

What I have been doing is, admittedly, the opposite of what I should have done. Currently I am reading through code in order to interpret that code via a concept map. In an ideal world I would have started the project with a concept map and then used it to write the appropriate code. Realistically, the concept map probably comes first in an incomplete form, and then code and concept map are updated together in an iterative process. But writing the program and never mapping until it is done is a definite no-no, and I will not build another model this way (in my defense, I was not an accomplished concept-mapper back in 2008 when this project started).

This is just a working draft of the fieldTest model sequencing diagram; I am sure that it will change in the process of editing our paper, but I figured it would be interesting to put up in this form.
